This document walks through a benchmark showing how PrimoCache improves disk write speed with its Defer-Write feature. When Defer-Write is enabled, PrimoCache completes write requests by storing the write-data in the cache first and writes it to disk later. Write requests are therefore acknowledged very quickly, which greatly improves the measured disk write speed.

Testing Platform

Motherboard: ASUS P6T SE (Intel X58 + ICH10R)

CPU: Intel Core i7-950 @ 3.06GHz

RAM: 20GB (4GB x 5, DDR3-1600)

Hard disk: Seagate ST31000528AS (SATA 3Gb/s, 1TB, 7200RPM, 32MB Buffer)

OS: Microsoft Windows 7 Ultimate (x64)

Benchmark Tool: Anvil's Storage Utilities 1.1.0

PrimoCache: version 1.0.1

Disk Write Speed without PrimoCache

Run Anvil's Storage Utilities on a volume to measure the disk write speed without PrimoCache. This result serves as the baseline for comparing performance when PrimoCache is used. As the figure below shows, the disk write score in this example is 87.72.

Disk Write Speed with PrimoCache

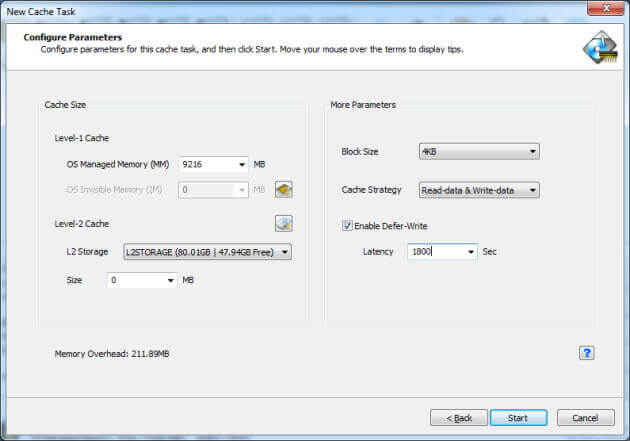

Create a cache task on the same volume with the following cache configuration.

Level-1 Cache: 9216MB (larger than Test Size)

Block Size: 4KB

Strategy: Read-data & Write-data

Defer-Write: Latency 1800s (long enough to avoid writing to disk during testing)

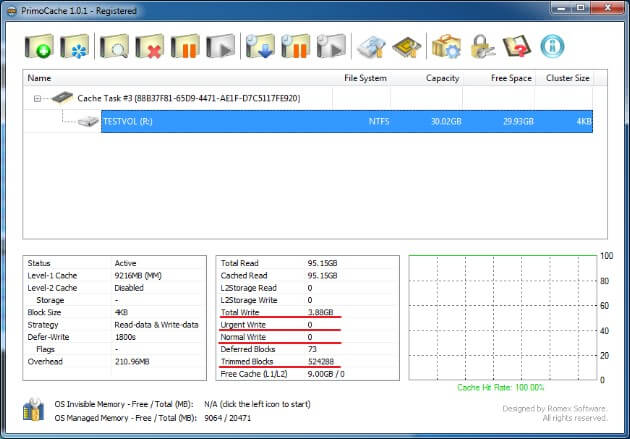

Run Anvil's Storage Utilities again to measure the disk write speed with level-1 cache and Defer-Write enabled. As the results show, the disk write score now rises to 21,515.00, about 245 times the baseline.
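The ratio between the two scores can be checked with a short calculation. The snippet below is only a worked example using the two scores reported in this document; the variable names are illustrative.

```python
# Write scores reported by Anvil's Storage Utilities in this test.
baseline_score = 87.72    # without PrimoCache
cached_score = 21515.00   # with level-1 cache and Defer-Write enabled

# Ratio of the cached score to the baseline score.
speedup = cached_score / baseline_score
print(f"Speedup: {speedup:.1f}x")  # prints "Speedup: 245.3x"
```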

As the statistics below show, the benchmark tool issued 3.88GB of write-data to the disk. However, all of this write-data was stored in the cache and none of it was synchronized from the cache to the disk: both Urgent Write and Normal Write are 0. That is why the disk write speed improves so dramatically.
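Why nothing reached the disk can be pictured with a toy model. The following is a minimal sketch of a deferred-write cache, not PrimoCache's actual implementation. The class `DeferredWriteCache`, its two counters, the helper `run`, and the scaled-down numbers (one block standing in for 1MB, a 60-second run) are all assumptions made for illustration, and the counters are not meant to correspond exactly to PrimoCache's Urgent Write and Normal Write statistics.

```python
from collections import OrderedDict


class DeferredWriteCache:
    """Toy write-back cache: a block stays in memory until its latency
    expires or the cache runs out of room (hypothetical model)."""

    def __init__(self, capacity_blocks, latency_s):
        self.capacity_blocks = capacity_blocks
        self.latency_s = latency_s
        self.dirty = OrderedDict()      # block number -> time it was first deferred
        self.flushed_on_timeout = 0     # blocks written to disk because latency expired
        self.flushed_on_pressure = 0    # blocks written to disk because the cache was full

    def flush_expired(self, now):
        # Oldest entries come first, so stop at the first block still within latency.
        while self.dirty:
            block, deferred_at = next(iter(self.dirty.items()))
            if now - deferred_at < self.latency_s:
                break
            self.dirty.popitem(last=False)
            self.flushed_on_timeout += 1

    def write(self, block, now):
        self.flush_expired(now)
        if block not in self.dirty:
            if len(self.dirty) >= self.capacity_blocks:
                # No free space: push the oldest deferred block out to disk first.
                self.dirty.popitem(last=False)
                self.flushed_on_pressure += 1
            self.dirty[block] = now


def run(capacity_blocks, latency_s, n_writes, duration_s):
    """Issue n_writes distinct block writes spread evenly over duration_s seconds."""
    cache = DeferredWriteCache(capacity_blocks, latency_s)
    for i in range(n_writes):
        cache.write(i, duration_s * i / n_writes)
    return cache


# Scaled-down version of the test: 9216 blocks of cache, roughly 3973 blocks written.
test_like = run(capacity_blocks=9216, latency_s=1800, n_writes=3973, duration_s=60)
print(test_like.flushed_on_timeout, test_like.flushed_on_pressure)  # prints "0 0"

# A short latency or a small cache forces data out to disk during the run.
short_latency = run(capacity_blocks=9216, latency_s=10, n_writes=3973, duration_s=60)
small_cache = run(capacity_blocks=1000, latency_s=1800, n_writes=3973, duration_s=60)
```

In this model, a cache larger than the data written combined with a latency longer than the run leaves both counters at zero, which mirrors the statistics reported for the test.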

Of course, this benchmark only shows the theoretical maximum disk write speed. In real-world usage there is usually not enough memory to hold all write-data. In addition, because deferred data may be lost on a system crash or power failure, the latency is usually set to a low value. A higher latency brings better performance but also carries a higher risk.
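The trade-off can be made concrete with a rough estimate of how much data sits unwritten in the cache at any moment. This is a simplified sketch that assumes a steady write rate and that each block is flushed exactly when its latency expires; the function `data_at_risk_mb` and the 5 MB/s write rate are hypothetical and do not come from the test above.

```python
def data_at_risk_mb(write_rate_mb_s: float, latency_s: float, cache_mb: float) -> float:
    """Upper bound on unwritten data: whatever was written during the last
    latency_s seconds, capped by the cache size."""
    pending = write_rate_mb_s * latency_s
    return min(pending, cache_mb)


CACHE_MB = 9216          # level-1 cache size used in this test
WRITE_RATE_MB_S = 5.0    # hypothetical sustained write rate

for latency_s in (10, 60, 1800):
    at_risk = data_at_risk_mb(WRITE_RATE_MB_S, latency_s, CACHE_MB)
    print(f"latency {latency_s:>4}s -> up to {at_risk:.0f} MB unwritten")
```

Under these assumptions, a 10-second latency exposes at most 50 MB to a crash, while the 1800-second latency used in the test exposes up to 9000 MB.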